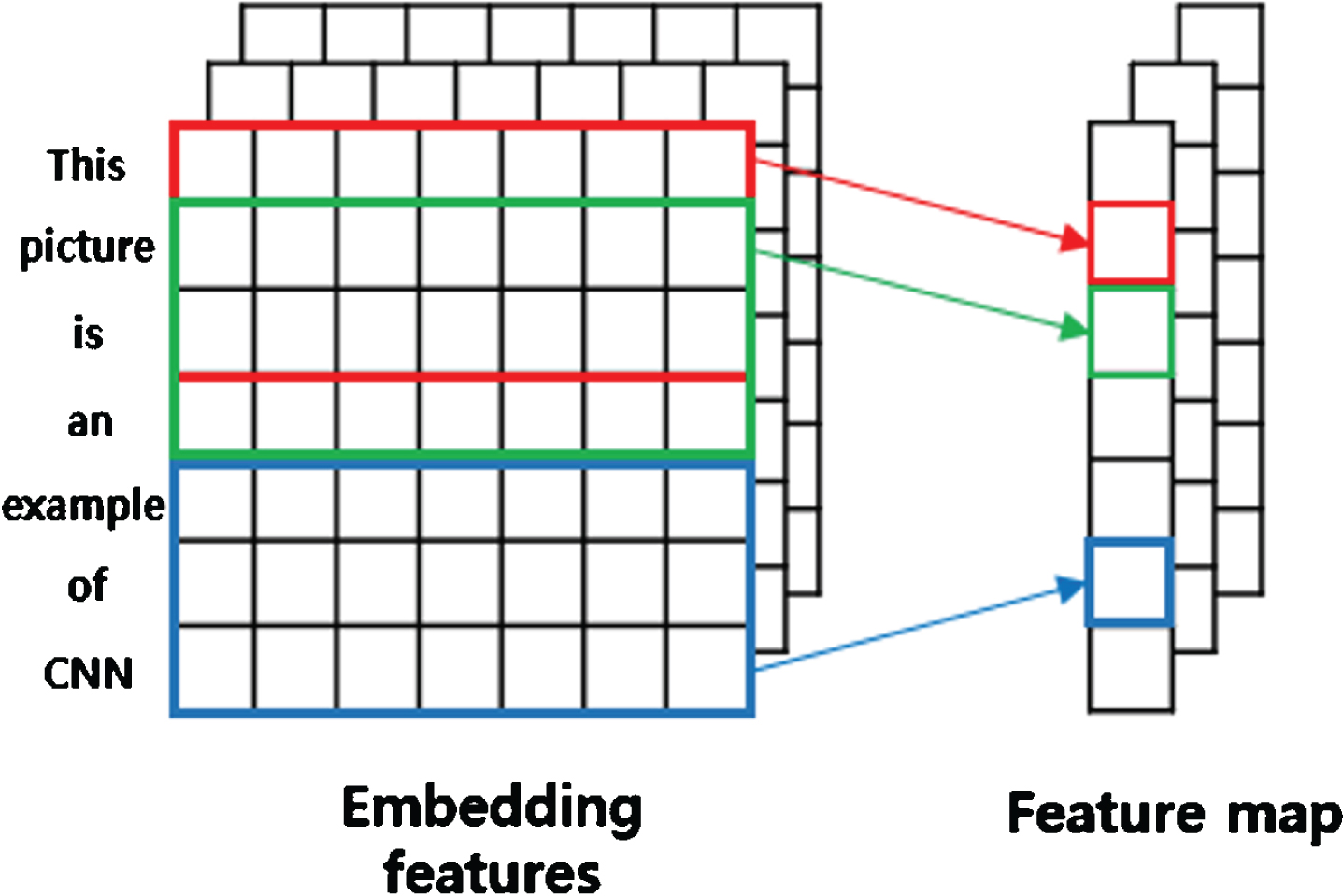

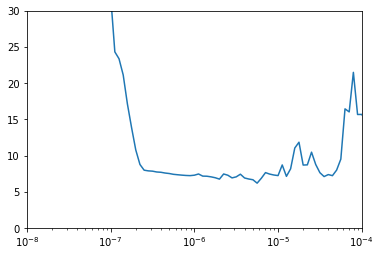

In computer vision, when we build convolutional neural networks (CNNs) for image problems such as image classification and image segmentation, we often define a network made up of several kinds of layers: convolution layers, pooling layers, dense layers, and so on. We also add batch normalization and dropout layers to keep the model from overfitting. But many people are confused about which layer Dropout and BatchNormalization should come after. In this article we explore Dropout and BatchNormalization and after which layer each should be added. We use the benchmark MNIST dataset, which consists of images of handwritten digits from 0 to 9. The dataset can be loaded from Keras and is also publicly available on Kaggle. The article answers two questions: what is BatchNormalization and where is it used, and what are Dropouts and where are they added?

A convolutional neural network (CNN) is a deep learning algorithm built from convolution layers, which extract feature maps from the image using different numbers of kernels. Pooling layers then reduce the dimensions of these feature maps. There are different types of pooling layers, namely max pooling and average pooling. The network also contains other layers, such as dropout and dense layers. The accompanying image shows an example of a CNN.

What is BatchNormalization? Where is it used?

Batch normalization is a layer that lets every layer of the network learn more independently of the others. It normalizes the output of the previous layer, so the activations passed to the next layer are rescaled to a standard range. Batch normalization makes learning more efficient, and it can also act as a regularizer that helps avoid overfitting. The layer is added to the sequential model to standardize the inputs or the outputs. It can be used at several points between the layers of the model. It is often placed just after defining the sequential model and after the convolution and pooling layers.

The code excerpts below show how the BatchNormalization layer is used for the classification of handwritten digits. We first import the required libraries and the dataset. There are 60,000 images in the training data and 10,000 images in the testing data. We then reshape the training and testing images and define the CNN network.

```python
from keras.layers import BatchNormalization

# Reshape the test images to (samples, height, width, channels)
X_test = X_test.reshape(len(X_test), 28, 28, 1)

# Dense layers at the end of the network; the last one outputs the 10 digit classes
model.add(Dense(units=128, activation='relu'))
model.add(Dense(units=64, activation='relu'))
model.add(Dense(units=32, activation='relu'))
model.add(Dense(units=10, activation='softmax'))
```

What are Dropouts? Where are they used?

Dropout is a regularization technique used to prevent overfitting in the model. A dropout layer randomly switches off some percentage of the neurons of the network during training. When a neuron is switched off, its incoming and outgoing connections are switched off as well. This keeps the network from relying too heavily on any single neuron, which improves how well the model learns.
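To make the idea of switching off neurons concrete, here is a minimal pure-Python sketch of dropout applied to one layer's activations. It is an illustration, not the article's code: the function name `dropout` and its parameters are hypothetical, and it assumes the common "inverted dropout" variant, in which surviving activations are scaled by `1 / (1 - rate)` so their expected sum is unchanged.

```python
import random


def dropout(activations, rate, training=True, rng=random):
    """Zero each activation with probability `rate` (hypothetical helper).

    Surviving activations are scaled by 1 / (1 - rate) ("inverted dropout").
    Outside of training the activations pass through unchanged.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    # Each neuron is kept independently with probability `keep`.
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


# Usage: drop roughly half of ten activations with a seeded generator.
rng = random.Random(0)
layer_output = [1.0] * 10
dropped = dropout(layer_output, rate=0.5, rng=rng)
print(dropped)
```

Each printed value is either `0.0` (neuron switched off) or `2.0` (neuron kept and rescaled). In Keras the corresponding layer is `Dropout(rate)`, which is only active during training.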
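The normalization step that BatchNormalization performs can also be sketched in plain Python. The example below is an assumption-laden illustration rather than the Keras implementation: the name `batch_norm` is hypothetical, it covers only the training-time forward pass over one mini-batch, and `gamma`, `beta`, and `eps` stand in for the learned scale, the learned shift, and a small stabilizing constant.

```python
import math


def batch_norm(batch, gamma=None, beta=None, eps=1e-3):
    """Standardize each feature over a mini-batch (hypothetical helper).

    `batch` is a list of samples, each a list of feature values.
    Every feature is shifted to zero mean and scaled to unit variance
    across the batch, then multiplied by `gamma` and shifted by `beta`.
    """
    n = len(batch)
    n_features = len(batch[0])
    gamma = gamma or [1.0] * n_features
    beta = beta or [0.0] * n_features
    # Per-feature mean and (biased) variance over the batch.
    means = [sum(row[j] for row in batch) / n for j in range(n_features)]
    variances = [
        sum((row[j] - means[j]) ** 2 for row in batch) / n
        for j in range(n_features)
    ]
    return [
        [
            gamma[j] * (row[j] - means[j]) / math.sqrt(variances[j] + eps) + beta[j]
            for j in range(n_features)
        ]
        for row in batch
    ]


# Usage: two features on very different scales end up comparable.
batch = [[0.0, 100.0], [2.0, 300.0], [4.0, 500.0]]
for row in batch_norm(batch):
    print(row)
```

After normalization both columns have roughly zero mean and unit variance, which is what lets the next layer see inputs in a consistent range regardless of the scale of the previous layer's outputs.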
